In this page I provide what I hope is either an inspirational or, at least, amusing account of my prehistory leading up to the development of the magic kernel. (Alternative title: “How a colorblind Australian ended up fixing most of the cat photos on the Internet.”)

By September 1983, after five months of working after school a couple of days a week at McDonald’s, I had saved the $620 I needed to buy an Apple II clone (manufactured illegally in Asia) I saw in the Trading Post, the weekly Melbourne newspaper that listed mainly second-hand items for sale. (It was not dissimilar to my saving my pocket money for six months in around 1973 to buy the latest in digital technology then available: a $9.99 four-function calculator; I still remember lovingly keeping the dog-eared K-Mart catalog page for it next to my piles of pen-and-pad mathematical calculations, until the day came to ask my Mum to drive us out on Burwood Highway to the K-Mart to make the sacred purchase.) I bought it from Edward Jozis in South Melbourne, who had started a small company importing computer equipment from Asia, Micronica Australia, run from his home. Incredibly, in 2025, 42 years later, Ed was still running the same business, and I was able to chat with him over email. I mention one of his kind comments below.

The Apple II had just 48 KB of RAM, and no floppy disk drive—programs were saved to and reloaded from audio cassettes, if you had a tape recorder handy to connect to the computer with an audio cable—but I finally had my own computer.

Bitmapped graphics caught my attention immediately (it even had six different colors!). After exhausting what I could do with the very slow graphics functionality contained in Applesoft BASIC (I think my first graphics program on a school Apple II computer in early 1982—the earliest chance I had to use a computer—was a graph of “biorhythms,” which seemed quite advanced to a 15-year-old kid who didn’t even know what a sine function was), I moved on to machine code, even building a simple but visually accurate emulation of one part of Pac-Man. (Without sounds.)

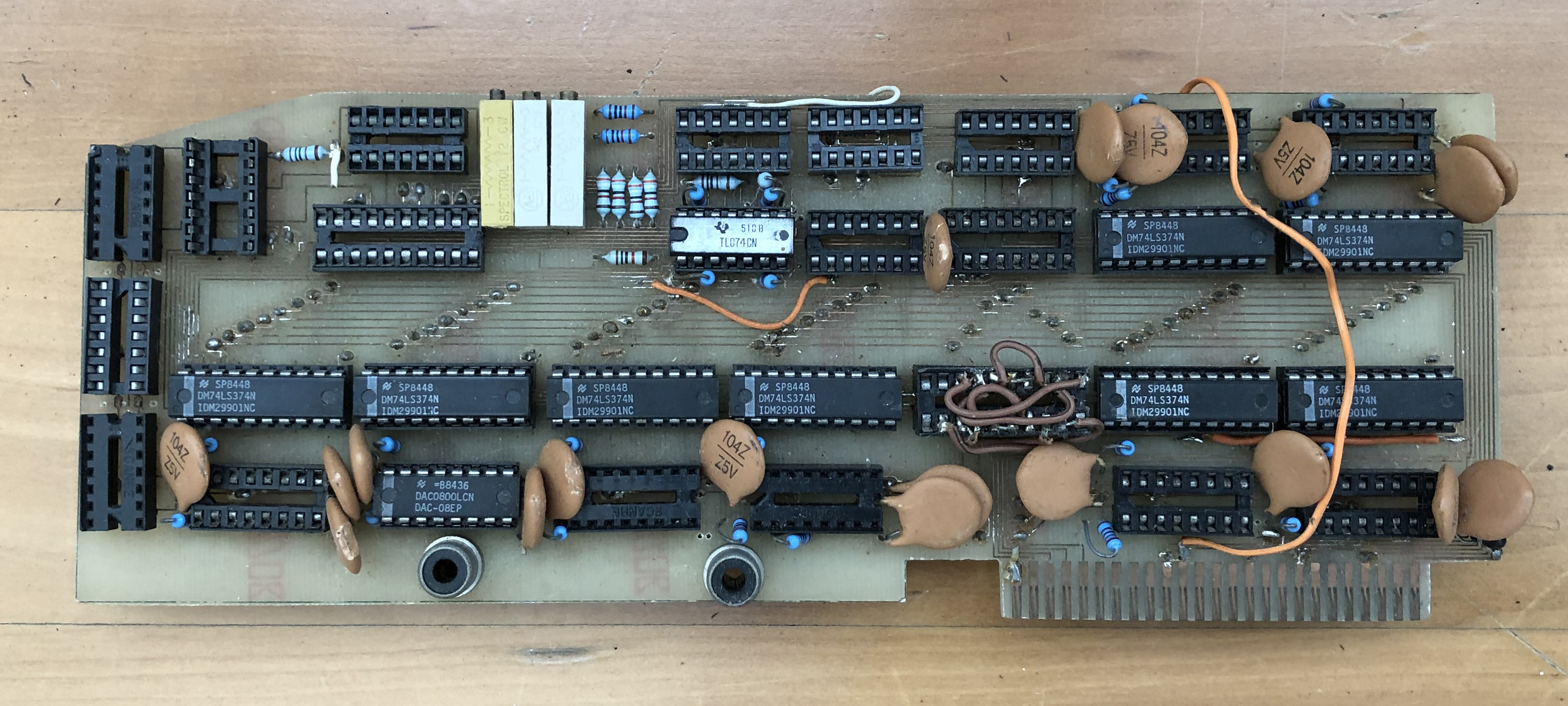

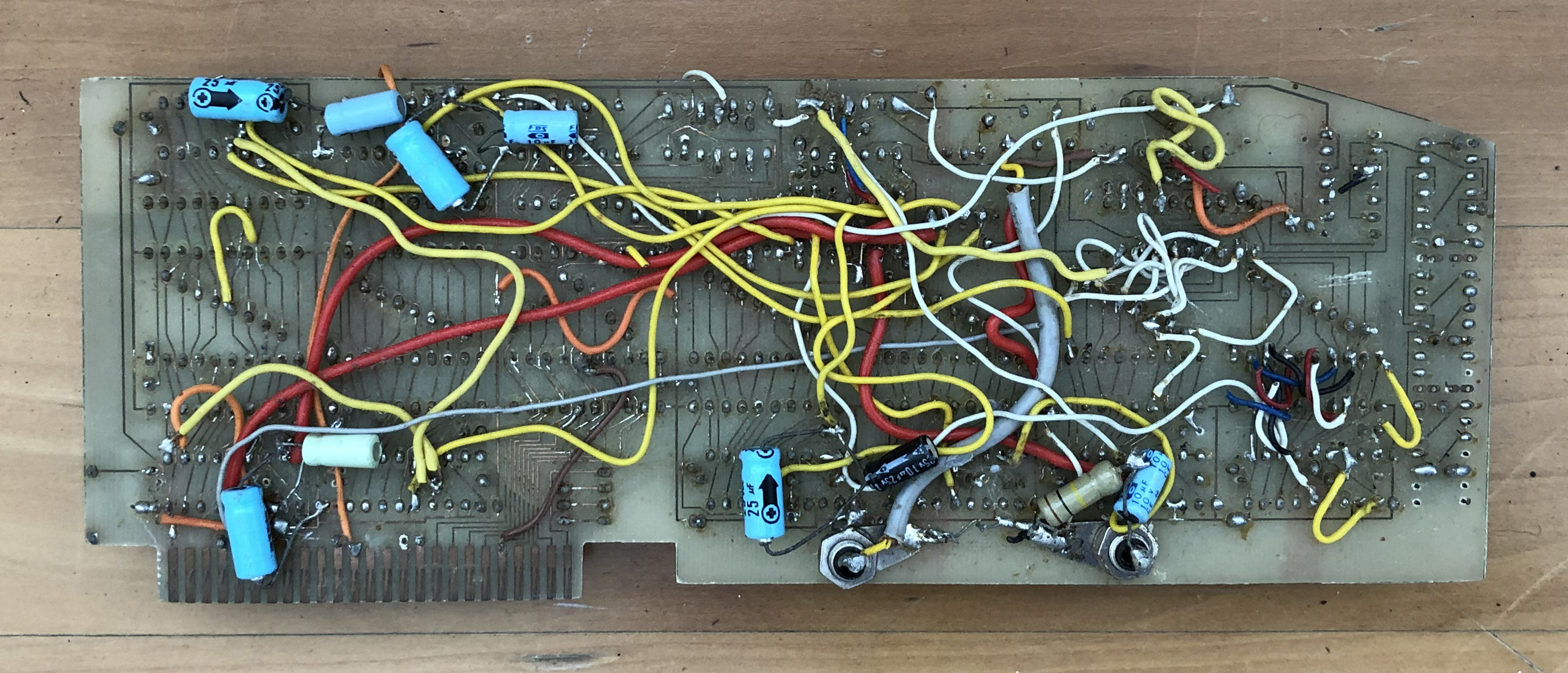

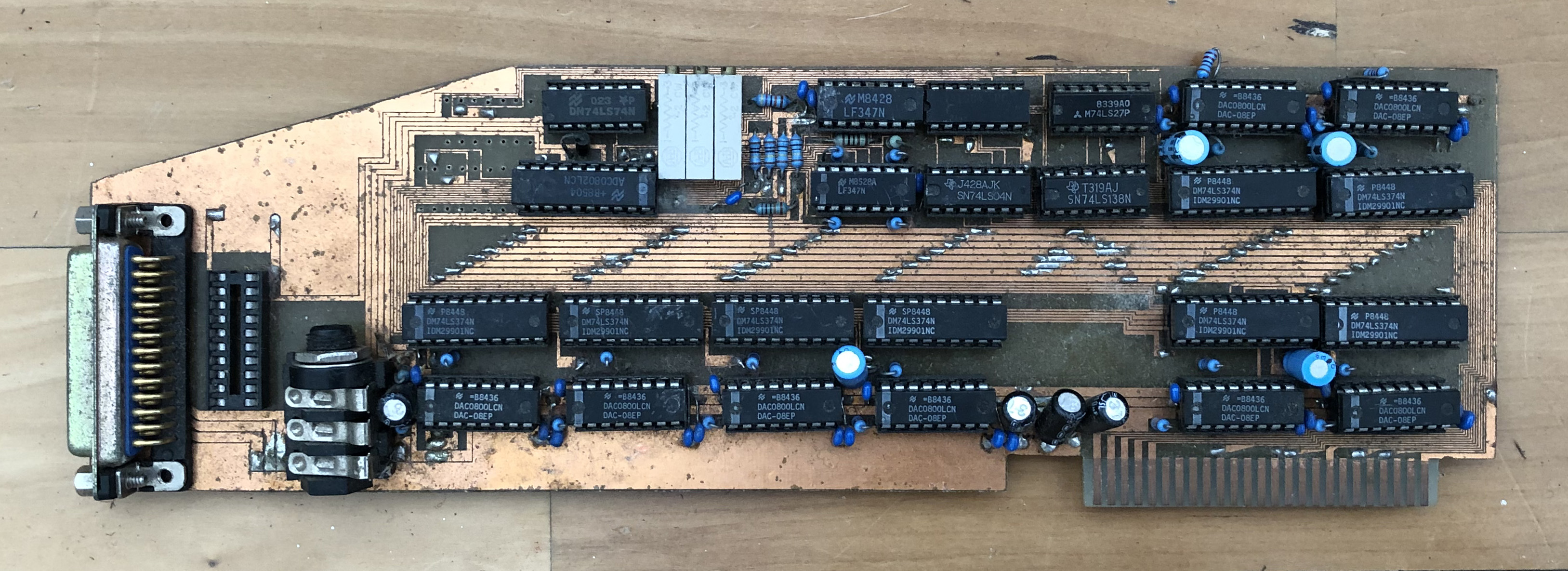

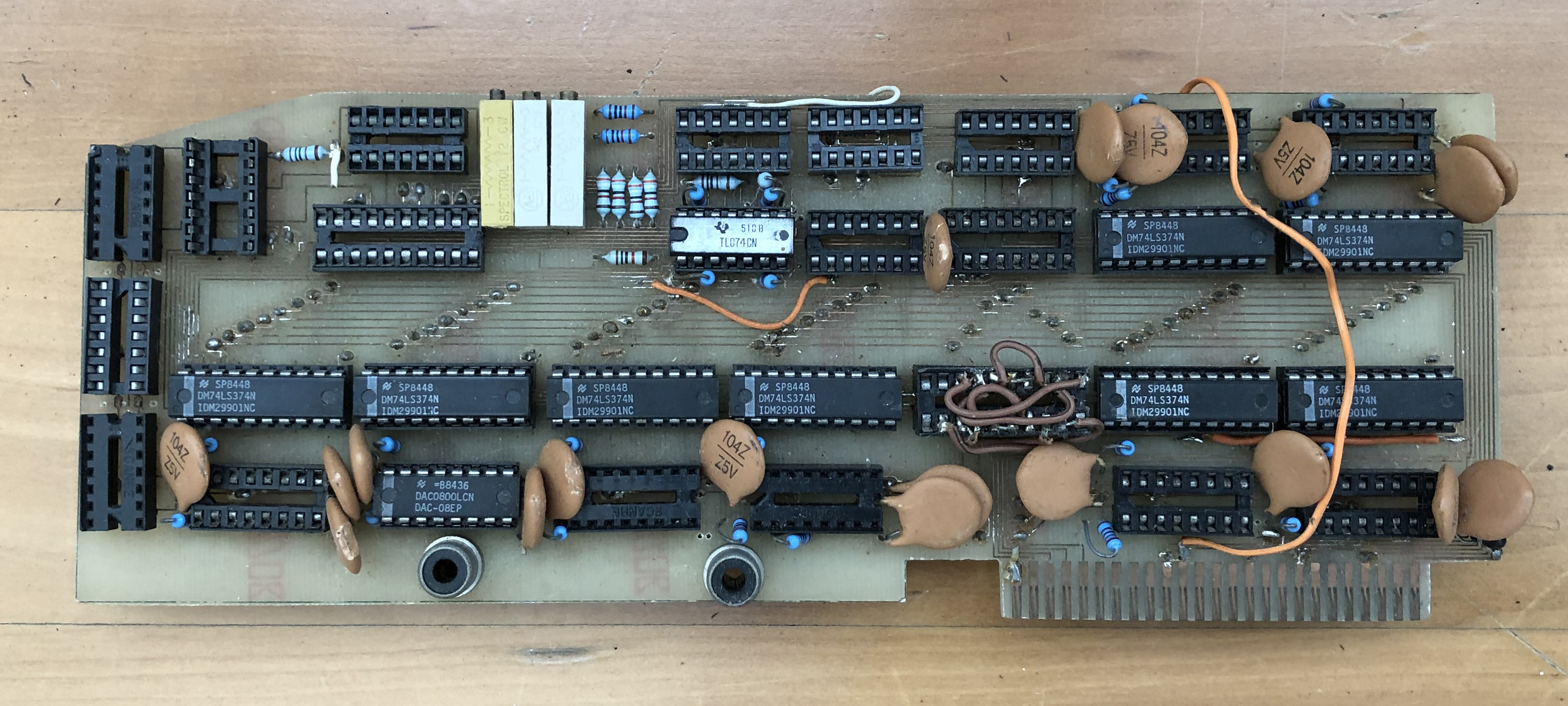

By 1985 I had dropped out of a Science/Engineering double degree at Monash University (before the “census date,” so officially I never went there; having been expelled from a school in 1981 had reduced my sensitivity to such life-changing decisions). I had, by that time, climbed to part-time management rank at McDonald’s, and I was building my own interface cards for my Apple II, making use of those graphics programming skills to create the circuit board layouts, which I would print out at larger scale on a dot-matrix printer, have photographically reduced at a photo store to transparencies at the final size, and then expose (using a 240 V ultra-violet fluorescent light mounted in a beer cooler!) onto the circuit board blank before treating it with the chemicals needed to etch away the copper. In later years I discounted the simple LAN interface boxes I built using the joystick port (!) of the Apple II, but in 2025 Ed Jozis had such kind words about that invention (which I co-branded with Micronica Australia, and some units of which he actually sold to customers) that I must memorialize it here. I was prouder of, and more excited by, the interface cards (which plugged directly into the motherboard of the Apple II) that I created to digitally sample and play back snippets of music:

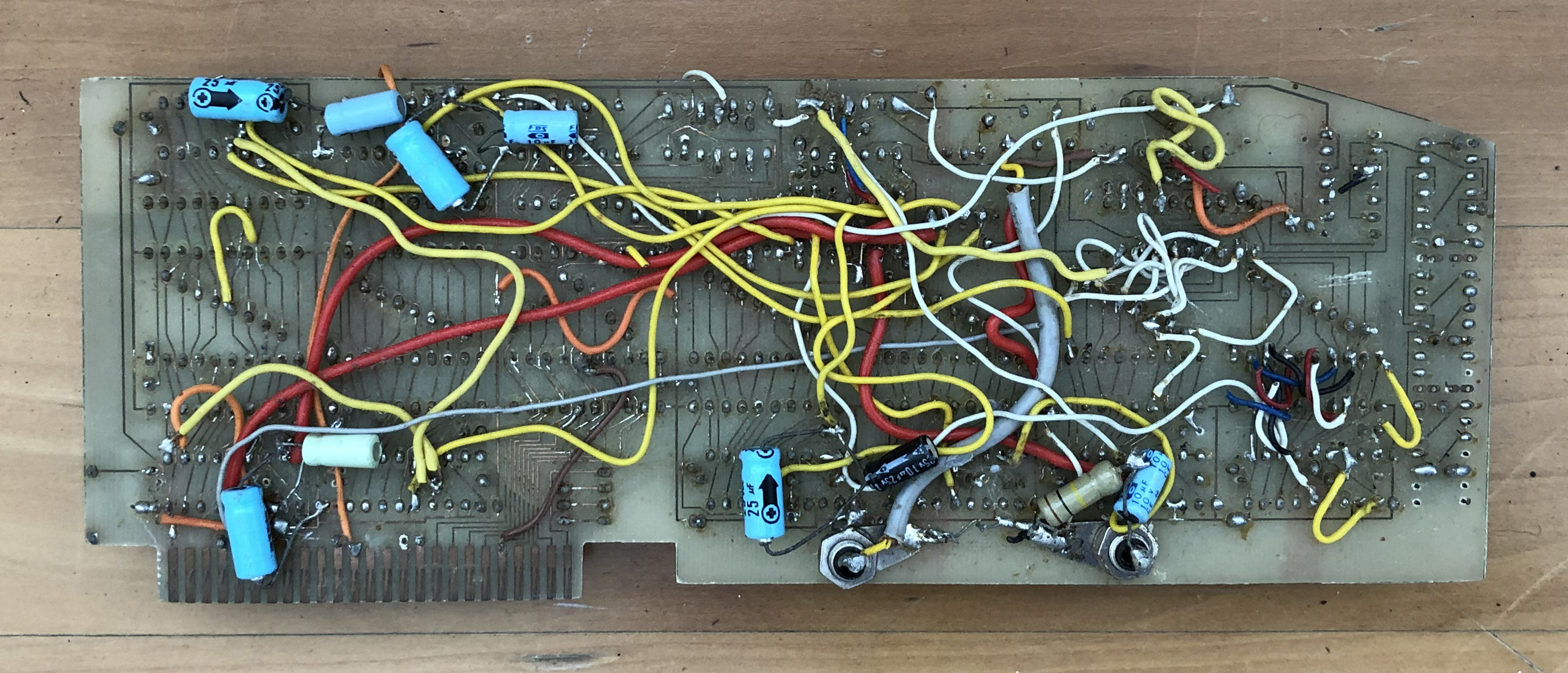

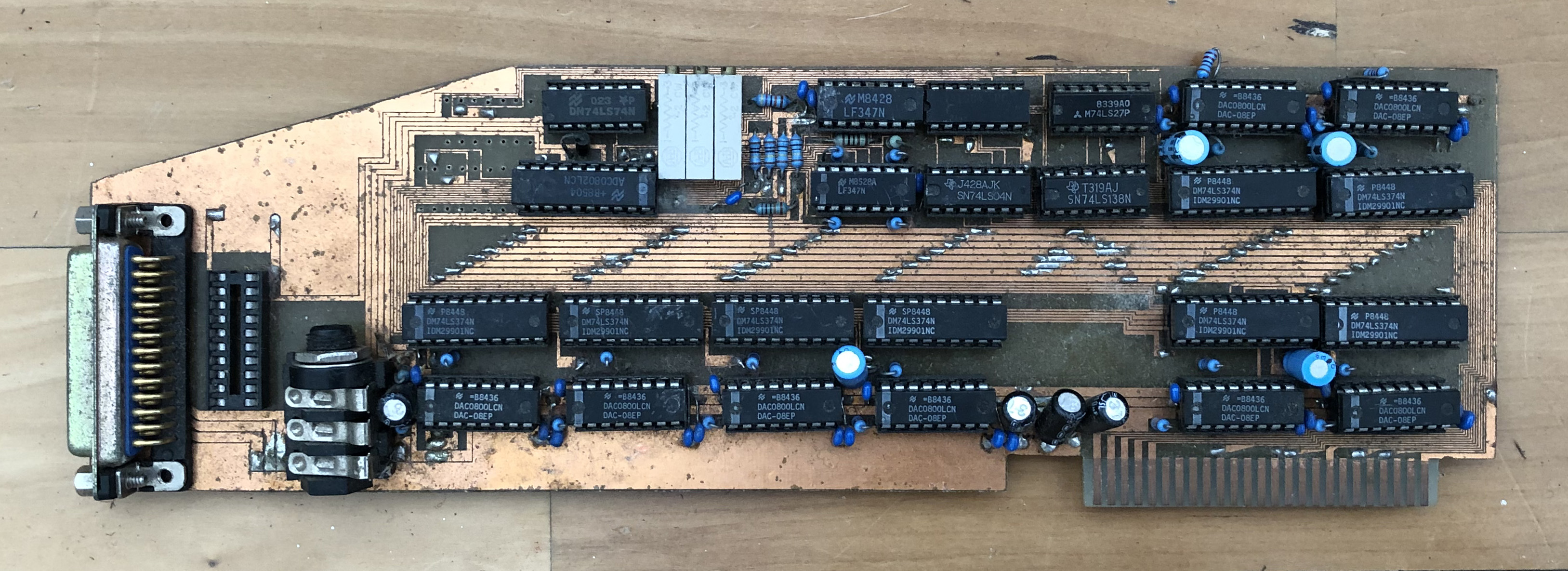

Some of the interface boards I designed and built in 1985

(Photographed decades later, by which time the unprotected copper had been attacked by the elements.) With the custom hardware, I was able to get the Apple II’s 1 MHz 6502 processor collecting samples in a loop that, from memory, took about 60 machine cycles. That meant that it was sampling at about 16 kHz, which was amazing quality for a home hobby project at the time: in the same league as, though not quite matching, the 44.1 kHz of the latest hotness in personal audio technology that was just coming out, the Compact Disc player. Of course, my Apple’s by-then 64 KB of RAM could only hold a few seconds of samples, but that was fine by me. My latest hobby was drumming, which a drummer colleague of mine at McDonald’s, Andy Costello (no relation, obviously), had got me hooked on, and capturing samples from my drum kit, and building my own digital drums, was all I wanted the hardware for.

It all worked well, except that the samples of the hi-hat were distorted, and I couldn’t find anything wrong with either my programming or the hardware. Finally I caught the tram back down to the library at the Royal Melbourne Institute of Technology (where I’d first found the handbooks for those 74LS chips you see on those boards; remember, no Internet), and after a lot of reading found out that something called “aliasing” was probably the culprit, and that I needed to add “low-pass filters” before the analog-to-digital sampling chips. (That probably explains all those electrolytic capacitors and resistors on the prototype on the left.) The filters partially fixed the problem, but the episode also made me realize that I had probably reached the limit of amateur electronics, and that there seemed to be a lot of theory and physics behind how these devices worked.
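As a rough check of the figures quoted above, the following C sketch works through the arithmetic. It is an illustration only, not the original 6502 code: the function names are hypothetical, the one-byte sample size is an assumption (the text does not state it), and it pretends that all 64 KB of RAM was free for samples, which would not have been true in practice.

```c
/* Hypothetical helpers illustrating the sampling arithmetic; not the original code. */

/* Sample rate (Hz) of a loop that takes `cycles_per_sample` CPU cycles per sample. */
double sample_rate_hz(double cpu_clock_hz, double cycles_per_sample)
{
    return cpu_clock_hz / cycles_per_sample;
}

/* Seconds of audio that fit in `buffer_bytes` of memory.
   Assumption: every byte of the buffer is available for samples. */
double seconds_of_audio(double buffer_bytes, double bytes_per_sample, double rate_hz)
{
    return buffer_bytes / (bytes_per_sample * rate_hz);
}

/* Apparent frequency (Hz) of a pure tone of `tone_hz` (>= 0) after sampling
   at `rate_hz` with no low-pass (anti-aliasing) filter in front of the converter. */
double alias_frequency_hz(double tone_hz, double rate_hz)
{
    /* Remove whole multiples of the sample rate, leaving a value in [0, rate_hz). */
    double folded = tone_hz - rate_hz * (double)(long)(tone_hz / rate_hz);

    /* Anything above half the sample rate is mirrored back below it. */
    if (folded > rate_hz / 2.0) {
        folded = rate_hz - folded;
    }
    return folded;
}
```

With the figures quoted, `sample_rate_hz(1.0e6, 60.0)` comes to roughly 16.7 kHz, and at that rate 65,536 one-byte samples last just under 4 seconds. A 12 kHz component sampled at 16 kHz gives `alias_frequency_hz(12000.0, 16000.0)` equal to 4000.0: it is recorded as a spurious 4 kHz tone. A cymbal has plenty of energy above half the sample rate, which is why filtering it out before the converter helps.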

My cousin Stephen liked this digital drum machine so much (I can still hear his compositions of Phil Collins’ Sussudio drums in my head, even today) that four years later he bought the computer and the final iteration of the digital sampling card off me for his own use. But I am getting ahead of myself.

My interest in pursuing electronics spurred me to look into the Control Data courses that I had seen advertised on television: something like six thousand dollars for a ten-week course which would guarantee me a job fixing computers. I didn’t have that sort of money, of course, but I went to the State Trustees, who managed the small trust fund that my Dad had left me in his will, which had about that much left in it. I wasn’t supposed to get any of it until I was 25, but they had authorized amounts to my Mum for my school education, and I figured that this was another reasonable educational expense.

The trustee gave me probably the best advice I ever received: why pay all those thousands for a quickie course, when I could just apply to restart my university studies in 1986 in an Electrical Engineering degree at the University of Melbourne—which cost nothing at all—and do it properly?

I caught the tram from the city up to the University, walked in the Grattan Street gate to the Faculty of Engineering, and fell in love with the place immediately.

By December 1989, three summers of interning at IBM during that degree had allowed me to save enough money to follow the trend of my university peers in moving away from the Apple II to IBM, and upgrade to (what else?) an IBM PC (actually a genuine PS/2 Model 50—no clone this time around). I now had the opportunity to use VGA graphics—space-age science fiction compared to the graphics of the Apple II. I decided to move from the machine code of the Apple II to a new language that I had learned the previous year in my Engineering degree, which was close enough to machine code that it was in many cases just as fast, but which worked across different machines and microprocessors—and had just been standardized by the American National Standards Institute: C. I even went to a computer shop and bought a copy of Microsoft QuickC—the first time that I had paid money for software.

Having now spent money on software, I soon thought up an idea for making money from this new technology, leveraging my growing proficiency with computer graphics (something I needed to do, as I had perhaps foolishly gotten the bug for theoretical physics and started the process of doing a Ph.D. in the field, which basically eliminated my chance of earning any real money for the foreseeable future, and ended my prospects for any more internships at IBM). My mother had for decades done cross-stitch embroideries, buying the printed “patterns” for them from handcraft stores, and I knew that there was a small but dedicated cadre of embroiderers who did likewise. I had also had the chance, while interning at IBM, to use a new device that would scan a piece of paper and convert it to a digital bitmapped image on a computer: a “scanner.” By 1990, HP had just released the first color scanner cheap enough for the domestic market, and laser printers were also becoming cheap enough to no longer be just the domain of huge companies. Could I write a program that scanned photographs and converted them to laser-printed cross-stitch design patterns?

Before laying out any hard cash for a color scanner, I decided to try out the process on a test image that was on a floppy disk accompanying a book on computer graphics that I had bought. (There was no World Wide Web in 1990.) It worked: my mother actually stitched it (I still have it hanging on our wall):

The frog test image and my Mum’s stitching of it from the “pattern” (stitch-by-stitch instructions) that my program created

After talking to the folks who ran the embroidery shop where my mother bought her patterns and materials, it seemed likely that I could sell such a service for $50 per design. I did a quick break-even analysis, and decided to make the investment in the HP scanner.

And in the years to follow, “Costella Design” (just a name, not a real company) did recoup that investment, and then some. (I had to redo a couple of designs that had suffered from my colorblindness—one part of the process, reducing the number of different-colored cottons required, was semi-manual—but I always ended up with satisfied customers, and never had to issue any refunds.)

My C graphics programming moved on to visual simulations of the movement and spinning of electrons, using new equations of motion I was deriving in my Ph.D. studies (I still have a VHS videotape of a simulation I showed in one presentation).

In early 1994, with government funding running out for me to complete my Ph.D. thesis by June 30, I estimated that it would take me at least another year or two to finish the algebraic calculations. So I wrote C programs to perform the algebra for me—and then wrote some more to actually write the appendixes to my Ph.D. thesis. (My offer to post a floppy disk with the C code on it to anyone who emails a request for it is still, I think, enforceable—I do still have the code, but I no longer have any devices capable of reading or writing floppy disks, so I might have to email you an archive.)

By 1997, my postdoctoral research position in theoretical particle physics had come to an end, and to make ends meet I enrolled in a teaching qualification and started teaching mathematics at a high school in Melbourne. I stated up-front to the Headmaster at Mentone Grammar who hired me that I only planned to be a teacher “for a few years.” (It ultimately stretched to nine years.)

To keep myself sane while trying to convince teenage brats that mathematics was something they needed to learn, I kept doing my research in theoretical physics, writing C programs to explore various ideas.

In the summer school vacation of 2000 I got an idea for deblurring images, inspired by some light reading that I had borrowed from the local Narre Warren Public Library: a coffee-table book about the JFK assassination, full of photographs of the event, that the young brother of a former girlfriend had first shown me seven years earlier. That led to a four-year hobby of studying the photographic evidence of the assassination, writing increasingly sophisticated image processing code in C to sort through competing theories, which ultimately (and unexpectedly) made me one of the world’s experts on the Zapruder film of the assassination. This image processing work was critical for what follows. (But it did have a down-side: in 2011, I failed to secure a position at Google New York after on-site interviews; the recruiter told me that my engineering skills were great, but “some of the hiring committee were spooked by your work on conspiracy theories.” I guess that’s an example of two steps forward, one step back.)

In 2006 I finally escaped from high-school teaching, securing a reliability analysis position at the Defence Materiel Organisation of the Australian Department of Defence. (Yes, when I’m an Australian, we spell those words that way.) The work was engaging, but as a public servant I still had my spare time free to work on hobbies. I was considering putting together a new “High Definition” version of the stabilized Zapruder film, improving on the reference set of frames that I had put out in 2002, because an increasing number of videos were appearing on a new video site called “YouTube” that were using my version of the frames—and suddenly the fruits of my labors were being seen not just by hundreds of assassination researchers, but by tens and then hundreds of thousands of ordinary folks surfing the Internet.

As part of the work for preparing for that new version of the frames, I was looking in more detail at the primary source material: the 1997 DVD Image of an Assassination released by MPI, which (formally) fulfilled one of the requirements of the President John F. Kennedy Assassination Records Collection Act of 1992: to make a reference copy of the Zapruder film available to the general public. I noted that, as the frames of this DVD were compressed with MPEG-2 (as most DVDs are), the individual frames, when examined closely, contain a very slight amount of the same “blocky” artifacts that can be seen in over-compressed JPEG images. For example, consider this portion of frame 188 (enlarged by a factor of two to make it easier to view):

Portion of Frame 188 of the Zapruder film, enlarged

If we apply a significant amount of sharpening to the original image,

Portion of Frame 188 of the Zapruder film, sharpened and enlarged

we can faintly see vertical and horizontal lines that represent these block boundaries. Contemplating this issue, I thought of a way to remove these block boundary artifacts as much as possible, without destroying the true details of the image. I developed this into the UnBlock algorithm, which does about the best job possible on a single image, without using any information not contained in the image itself.

To process JPEG files in my UnBlock code, which I wrote in C, I needed to make use of the popular JPEG library, also written in C, created by the Independent JPEG Group (IJG). (That library is today the standard reference library for JPEG provided on most operating systems.)

And buried within that library I found something that was immensely curious.

© 2021–2025 John Costella